Optimizing TLS Record Size & Buffering Latency

TLS record size can have a significant impact on the page load time of your application. In fact, in the worst case, which unfortunately is also the most common case on the web today, it can delay processing of received data by up to several roundtrips! On mobile networks, this can translate to hundreds of milliseconds of unnecessary latency.

The good news is, this is a relatively simple thing to fix if your TLS server supports it. However, first let's take a step back and understand the problem: what is a TLS record, why do we need it, and why does record size affect latency of our applications?

Encryption, authentication, and data integrity

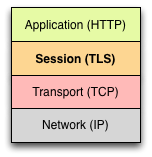

TLS provides three essential services: encryption, authentication, and data integrity. Encryption obfuscates the data, authentication provides a mechanism to verify the identity of the peer, and integrity provides a mechanism to detect message tampering and forgery.

Each TLS connection begins with a handshake where the peers negotiate the ciphersuite, establish the secret keys for the connection, and authenticate their identities (on the web, typically we only authenticate the identity of the server - e.g. is this really my bank?). Once these steps are complete, we can begin transferring application data between the client and server. This is where data integrity and record size optimization enter into the picture.

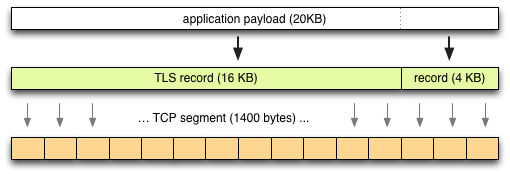

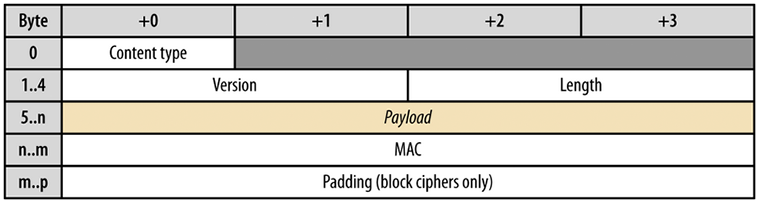

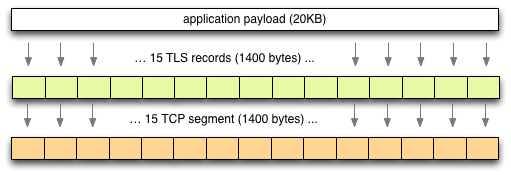

Before the application data is encrypted, it is divided into chunks of up to 16KB in size, and a message authentication code (MAC) is computed for each chunk. The chunk is then encrypted and, together with the MAC and some other protocol metadata, forms a “TLS record”, which is then forwarded to the peer.

The receiver reverses the sequence. First, the bytes are aggregated until one or more complete records are in the buffer (as the diagram above shows, each record specifies its length in the header). Once the entire record is available, the payload is decrypted, the MAC is computed once more over the decrypted data, and the result is verified against the MAC contained in the record. If the two MACs match, data integrity is assured, and TLS finally releases the data to the application above it.
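The buffering step on the receiving side can be sketched roughly as follows. This is a minimal illustration in Python, not how any particular TLS library implements it: the function name `extract_complete_records` is hypothetical, and the sketch assumes the standard 5-byte record header (1-byte content type, 2-byte protocol version, 2-byte payload length). Decryption and MAC verification are deliberately left out.

```python
import struct

# Assumed record header layout: content type (1 byte),
# protocol version (2 bytes), payload length (2 bytes).
HEADER_LEN = 5


def extract_complete_records(buffer: bytes):
    """Split `buffer` into complete TLS records plus any leftover bytes.

    Returns (records, remainder). Each record is a tuple of
    (content_type, version, payload); the payload is still encrypted.
    """
    records = []
    offset = 0
    while len(buffer) - offset >= HEADER_LEN:
        content_type, version, length = struct.unpack_from("!BHH", buffer, offset)
        end = offset + HEADER_LEN + length
        if end > len(buffer):
            break  # record is incomplete: nothing can be released yet
        records.append((content_type, version, buffer[offset + HEADER_LEN:end]))
        offset = end
    return records, buffer[offset:]
```

Feeding this function the first 7 bytes of a 9-byte record yields no records and hands all 7 bytes back as the remainder; the record only comes out once the final 2 bytes have arrived.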

Head-of-line blocking, TLS records, and latency

TLS runs over TCP, and TCP promises in-order delivery of all transferred packets. As a result, TCP suffers from head-of-line (HOL) blocking where a lost packet may hold all other received packets in the buffer until it is successfully retransmitted - otherwise, the packets would be delivered out of order! This is a well-known tradeoff for any protocol that runs on top of a reliable and in-order transport like TCP.

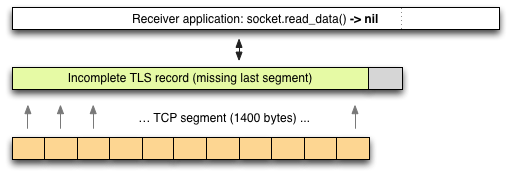

However, in the case of TLS, we have an extra layer of buffering due to the integrity checks! Once TCP delivers the packets to the TLS layer above it, we must first accumulate the entire record, then verify its MAC, and only when that passes can we release the data to the application above it. As a result, if the server emits data in 16KB records, then the receiver must also read data 16KB at a time.

In other words, even if the receiver has 15KB of the record in the buffer and is waiting for the last packet to complete the 16KB record, the application can't read any of it until the entire record is received and the MAC is verified - therein lies our latency problem! If any packet is lost, we will incur a minimum of one additional RTT to retransmit it. Similarly, if the record happens to exceed the current TCP congestion window, then it will take a minimum of two RTTs before the application can process the data.
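To make the congestion window case concrete, here is a rough back-of-the-envelope sketch. It is a simplified model rather than a description of any real TCP stack: it assumes no packet loss, a window that doubles on every roundtrip (slow-start), and a 1460-byte maximum segment size, and it ignores TLS framing overhead. The function name `flights_to_deliver` and all default values are assumptions made for illustration.

```python
import math


def flights_to_deliver(record_bytes: int, cwnd_segments: int, mss: int = 1460) -> int:
    """Count the roundtrip "flights" needed to send one whole record.

    Simplified model: no loss, and the congestion window doubles
    after each flight, as in TCP slow-start.
    """
    if cwnd_segments < 1:
        raise ValueError("cwnd_segments must be at least 1")
    segments_left = math.ceil(record_bytes / mss)
    flights = 0
    while segments_left > 0:
        segments_left -= cwnd_segments  # send as much as the window allows
        cwnd_segments *= 2              # window grows for the next flight
        flights += 1
    return flights
```

Under these assumptions, a 16KB record (12 segments) needs two flights with a starting window of either 4 or 10 segments, while a record that fits into a single segment is always delivered in one.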

Eliminating TLS latency

The larger the TLS record size, the higher the likelihood that we may incur an additional roundtrip due to a TCP retransmission or "overflow" of the congestion window. That said, the fix is also relatively simple: send smaller records. In fact, to eliminate this problem entirely, configure your TLS record size to fit into a single TCP segment.

If the TCP congestion window is small (i.e. during slow-start), or if we're sending interactive data that should be processed as soon as possible (in other words, most HTTP traffic), then a small record size helps mitigate the costly latency overhead of yet another layer of buffering.
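How large can a record be and still fit into a single TCP segment? The arithmetic below is a sketch under stated assumptions, none of which come from the text above: a 1500-byte MTU, 20 bytes of IPv4 header (40 for IPv6), 20 bytes of TCP header, 12 bytes of TCP options (timestamps), and 29 bytes of per-record TLS overhead, which corresponds to a TLS 1.2 AES-GCM ciphersuite (5-byte header, 8-byte explicit nonce, 16-byte authentication tag). The function name `max_record_payload` is hypothetical; measure your own path and ciphersuite before settling on a value.

```python
def max_record_payload(
    mtu: int = 1500,         # assumed path MTU (typical Ethernet)
    ip_header: int = 20,     # IPv4; use 40 for IPv6
    tcp_header: int = 20,
    tcp_options: int = 12,   # assumed: TCP timestamps enabled
    tls_overhead: int = 29,  # assumed: TLS 1.2 AES-GCM framing per record
) -> int:
    """Largest application payload whose TLS record fits in one TCP segment."""
    segment_payload = mtu - ip_header - tcp_header - tcp_options
    return segment_payload - tls_overhead
```

With these defaults the result is 1419 bytes over IPv4, and `max_record_payload(ip_header=40)` gives 1399 bytes over IPv6.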

Configuring your TLS server

The bad news is that many TLS servers do not provide an easy way to configure TLS record size and instead use the default maximum of 16 KB. The good news is, if you are using HAProxy to terminate TLS, then you are in luck, and otherwise, you may need to fiddle with the source of your server (assuming it is open source):

- HAProxy exposes tune.ssl.maxrecord as a config option.

- Nginx historically hardcoded a 16KB size in ngx_event_openssl, which you can change and recompile from source; newer releases expose the ssl_buffer_size directive instead.

- For other servers, check the documentation!
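For HAProxy, the option mentioned above goes in the `global` section of the configuration. The fragment below is only a sketch: the value of 1400 bytes is an assumption chosen to stay within a single TCP segment on a typical 1500-byte MTU path, not a recommendation from the HAProxy project, so tune it for your own network and ciphersuite.

```
global
    # Assumed value: cap TLS records so each fits in roughly one TCP segment.
    tune.ssl.maxrecord 1400
```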

Web browsing is latency bound and eliminating extra roundtrips is critical for delivering better performance. All Google servers are configured to begin new connections with TLS records that fit into a single network segment - it is critical to get useful data to the client as quickly as possible. Your server should do so as well!

P.S. For more TLS optimization tips, check out the TLS chapter in High Performance Browser Networking.

Ilya Grigorik is a web ecosystem engineer, author of High Performance Browser Networking (O'Reilly), and Principal Engineer at Shopify — follow on