Automating Web Performance with mod_pagespeed

One of the best investments any development team can make is towards better tooling and automation of common workflows. Turns out, many web performance best practices are prime candidates for this type of automation: preprocessing, minification, concatenating and spriting assets, optimizing images, and so on.


One way to achieve this is through a build step before every deploy, which works as long as you control all the assets and the release process. But what if your product is a platform where users upload, replace, and modify content on the fly? That's where an optimizing proxy or a smart web server can do wonders: no build step is required, yet you can still guarantee an optimized experience to your visitors. How would you build such a proxy?

Apache and PageSpeed Optimization Libraries

mod_pagespeed is an open source Apache module designed to automate all of the popular web performance best practices: minification, inlining, spriting, image optimization, concatenating files, sharding, and the list goes on. Earlier today, the team announced the 1.0 release of the module, which includes over 40 optimization filters, and an install base of over 120K websites - nice milestone.

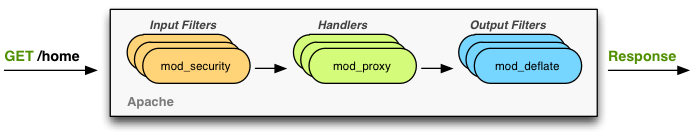

Turns out, mod_pagespeed itself is powered by another open-source project: PageSpeed Optimization Libraries (PSOL), which is a server-independent framework and a collection of C++ classes for optimizing web pages and their associated assets. To understand how PSOL and Apache work together, it is first worth taking a quick look at the high-level Apache architecture:

Every request served by Apache follows the same workflow. First, one or more input filters can be applied: authentication, security filters (mod_security), checking or modifying the incoming headers, and so on. Next, one or more handlers take the incoming request and generate output: a PHP handler can intercept all requests ending with .php, or a request can be proxied to a local or a remote app server (mod_proxy). Finally, once the output bytes are available, one or more output filters can be applied: compression (mod_deflate), output headers, and so on. Apache provides well-defined APIs for each of the stages, which enables a very powerful plugin architecture.
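To make the three stages concrete, here is a minimal sketch of the same flow in Python. It is not Apache's actual C API: the `Request` class, the stage signatures, and the example filters are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Request:
    path: str
    headers: Dict[str, str] = field(default_factory=dict)


# Hypothetical stage signatures; Apache's real hooks are C functions.
InputFilter = Callable[[Request], Request]
Handler = Callable[[Request], bytes]
OutputFilter = Callable[[Request, bytes], bytes]


def serve(request: Request,
          input_filters: List[InputFilter],
          handler: Handler,
          output_filters: List[OutputFilter]) -> bytes:
    """Run one request through input filters, a handler, then output filters."""
    for apply_input in input_filters:
        request = apply_input(request)
    body = handler(request)
    for apply_output in output_filters:
        body = apply_output(request, body)
    return body


def normalize_path(request: Request) -> Request:
    # Input filter: tidy the incoming request before the handler sees it.
    request.path = request.path.lower()
    return request


def static_handler(request: Request) -> bytes:
    # Handler: generate the response body for the request.
    return f"<html>  <body>{request.path}</body>  </html>".encode()


def collapse_spaces(request: Request, body: bytes) -> bytes:
    # Output filter: post-process the bytes produced by the handler.
    return b" ".join(body.split())


response = serve(Request("/Index.HTML"),
                 [normalize_path], static_handler, [collapse_spaces])
```

An output filter only sees bytes that a handler has already produced, which is why it is the natural place to hook in an HTML optimizer.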

Optimizing on the fly with mod_pagespeed

To optimize content served by Apache, mod_pagespeed installs an output filter which looks for HTML content. When an HTML file is detected, it is stream-parsed and a variety of filters can be applied to the output: the simplest filter may remove unused whitespace, or unnecessary quotes and comments, and more complex filters can rewrite the HTML by changing the order of resources, or replacing the resources entirely!

<!-- original source -->

<link rel="stylesheet" type="text/css" href="styles/yellow.css">

<link rel="stylesheet" type="text/css" href="styles/blue.css">

<link rel="stylesheet" type="text/css" href="styles/big.css">

<link rel="stylesheet" type="text/css" href="styles/bold.css">

<!-- rewritten resource -->

<link rel="stylesheet" type="text/css"

href="styles/yellow.css+blue.css+big.css+bold.css.pagespeed.cc.HASH.css">

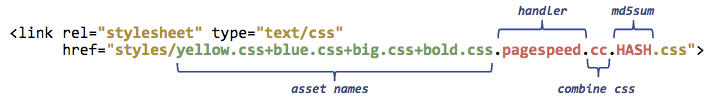

<div class="blue yellow big bold">Hello, mod_pagespeed!</div>In the example above, mod_pagespeed detected that we are requiring multiple CSS resources from the same host and replaced it with a combined CSS file. The constructed filename provides a lot of information:

Concatenating the resources into one file is easy, but mod_pagespeed goes a lot further: it lets you customize the minimum and maximum size for each asset, and it embeds an MD5 hash of the optimized asset in the filename, which means it has to actually fetch and process each of the files. That hash is the critical piece that allows for transparent optimization: whenever one of the files changes, the filename of the optimized asset is updated automatically. This also allows the optimized asset to be served with a far-future Expires header.
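The idea of a content-derived filename can be sketched in a few lines of Python. This is an illustration only: the function name is hypothetical, and the way the hash is truncated and encoded here is an assumption, not the module's actual scheme.

```python
import hashlib
from typing import Dict, List


def combined_css_name(names: List[str], sources: Dict[str, str]) -> str:
    """Build a combined-CSS filename whose hash changes with the content."""
    combined = "\n".join(sources[name] for name in names)
    # Assumption: a 10-character hex prefix of the MD5 digest stands in for HASH.
    digest = hashlib.md5(combined.encode("utf-8")).hexdigest()[:10]
    return "+".join(names) + ".pagespeed.cc." + digest + ".css"


css = {
    "yellow.css": ".yellow { color: yellow; }",
    "blue.css": ".blue { color: blue; }",
}
before = combined_css_name(["yellow.css", "blue.css"], css)

# Editing any source file yields a different filename, so stale copies
# cached under the old name are simply never requested again.
css["blue.css"] = ".blue { color: navy; }"
after = combined_css_name(["yellow.css", "blue.css"], css)
```

Because the name changes whenever the content does, each named resource is effectively immutable, which is what makes the far-future expiry safe.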

Optimizing assets with PageSpeed Handler

Once the output filter has rewritten the HTML with optimized asset URLs, the custom PageSpeed handler comes into play: it inspects the URLs of incoming requests and intercepts all .pagespeed. resources. As we saw in the CSS example above, the URL itself tells the handler all it needs to know to generate the optimized asset.
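As a rough sketch of what "the URL tells the handler all it needs to know" could look like, the following Python function splits a rewritten path into its parts. The names are hypothetical, and the parsing is deliberately simplified: it assumes the name.pagespeed.filter.hash.ext layout from the example above and ignores any escaping a real implementation would need.

```python
from typing import List, NamedTuple, Optional


class PagespeedResource(NamedTuple):
    directory: str
    sources: List[str]
    filter_id: str
    content_hash: str
    extension: str


def parse_pagespeed_url(path: str) -> Optional[PagespeedResource]:
    """Return the parts of a rewritten URL, or None for an ordinary resource."""
    directory, _, leaf = path.rpartition("/")
    head, marker, tail = leaf.partition(".pagespeed.")
    if not marker:
        return None  # not a rewritten resource; let another handler serve it
    parts = tail.split(".")
    if len(parts) != 3:
        return None
    filter_id, content_hash, extension = parts
    return PagespeedResource(directory, head.split("+"),
                             filter_id, content_hash, extension)


resource = parse_pagespeed_url(
    "styles/yellow.css+blue.css+big.css+bold.css.pagespeed.cc.HASH.css")
```

With the source names and the filter code in hand, a handler can regenerate the combined asset on demand even if it is not in the cache.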

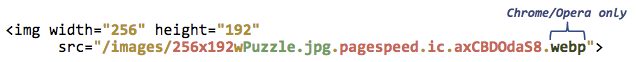

Image links are rewritten by the output filter and processed by the handler in a similar fashion, except that the image filter has many more built-in optimizations: it can resize images on the fly to the specified dimensions, strip unused metadata, and even recompress and re-encode images to different formats. Serving a PNG which is better encoded as a JPEG? The handler will attempt the conversion and serve the JPEG if there is a significant filesize difference. You can also specify a custom image quality target to further reduce the size of the images.

Best of all, because the image optimization is done with information about the client, the server can dynamically choose the optimal format. Chrome and Opera support WebP, which yields a 30% improvement in file size over JPEG, so a Firefox visitor may receive a JPEG, whereas a Chrome or Opera visitor may receive a smaller WebP asset.
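Here is one way such a per-client choice might be sketched in Python. It assumes the client advertises WebP support through its Accept header, which is only one possible signal; the text above does not say which client information the module actually uses, and the function name is hypothetical.

```python
from typing import Dict


def choose_image_variant(accept_header: str, variants: Dict[str, bytes]) -> str:
    """Pick the smallest encoded variant that the client can display.

    Assumption: every non-WebP format in `variants` is universally supported,
    and `variants` contains at least one such format.
    """
    accepted = {item.split(";")[0].strip().lower()
                for item in accept_header.split(",")}
    candidates = {
        mime: data for mime, data in variants.items()
        if mime != "image/webp" or "image/webp" in accepted
    }
    return min(candidates, key=lambda mime: len(candidates[mime]))


# Placeholder bytes stand in for real encoded images.
variants = {"image/jpeg": b"x" * 100, "image/webp": b"x" * 70}
for_webp_client = choose_image_variant("image/webp,image/*;q=0.8", variants)
for_other_client = choose_image_variant("image/png,image/*;q=0.8", variants)
```

A server that varies the response this way also needs to keep shared caches from handing the WebP variant to a client that cannot decode it.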

Efficiency and Performance

Performing asset optimization on the fly takes server resources. To address this, mod_pagespeed ships with a cache for optimized resources to ensure that each asset is only optimized once. Further, all of the optimizations are done asynchronously from the request, meaning that the first request is not penalized in terms of performance - the first visitor may receive the unoptimized asset, but the rest will be served out of the cache.
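The "optimize once, never block the first request" behavior can be approximated with a small cache backed by a worker pool. This is a sketch under stated assumptions, not how mod_pagespeed is implemented: the class name, the in-memory dictionary, and the thread pool are all illustrative choices.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Set


class OptimizingCache:
    """Serve the original until a background optimization has finished."""

    def __init__(self, optimize: Callable[[bytes], bytes]) -> None:
        self._optimize = optimize
        self._cache: Dict[str, bytes] = {}
        self._pending: Set[str] = set()
        self._lock = threading.Lock()
        self._pool = ThreadPoolExecutor(max_workers=2)

    def get(self, key: str, original: bytes) -> bytes:
        with self._lock:
            if key in self._cache:
                return self._cache[key]  # already optimized: serve from cache
            if key not in self._pending:
                # Schedule the work exactly once; do not wait for it.
                self._pending.add(key)
                self._pool.submit(self._run, key, original)
        return original  # the first visitors get the unoptimized asset

    def _run(self, key: str, original: bytes) -> None:
        result = self._optimize(original)
        with self._lock:
            self._cache[key] = result
            self._pending.discard(key)

    def close(self) -> None:
        # Wait for outstanding work; no new work can be scheduled afterwards.
        self._pool.shutdown(wait=True)


cache = OptimizingCache(lambda css: css.replace(b"  ", b" "))
first = cache.get("a.css", b"a  b")   # returned immediately, unoptimized
cache.close()                         # in this demo, wait for the worker
second = cache.get("a.css", b"a  b")  # now served from the cache
```

The trade-off is visible in the two calls: the first response is not delayed, at the cost of being unoptimized.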

Overall, it is a simple and clever architecture, but also definitely one with many different moving components. Getting all the implementation details, toggles, and configuration flags right is no small task. If you are curious to learn more about the history, architecture, and the machinery underneath mod_pagespeed, check out the GDL episode with Joshua Marantz, the tech lead on the project at Google.

Automating Web Performance

One of the best features of the mod_pagespeed project is that the core functionality has been extracted into the standalone PSOL libraries. The Apache module is simply a server-specific implementation for the Apache architecture, and if you have a custom server, you can leverage all of the hard work and the existing filters built by the team by embedding PSOL into your own project.

In fact, EdgeCast Networks integrated PSOL and mod_pagespeed into its CDN product earlier in the year, and more recently, the PageSpeed team kicked off an experimental implementation of the ngx_pagespeed module for the nginx server. If you are an nginx user, or a developer, definitely check it out and contribute. It would be awesome to see the PageSpeed module available across all the popular servers!

Ilya Grigorik is a web ecosystem engineer, author of High Performance Browser Networking (O'Reilly), and Principal Engineer at Shopify — follow on
